
Social engineering is a term that first emerged in the social sciences, where it referred to the direct intervention of scientists in human society. The term ‘social engineer’ was coined in 1894 by Van Marken to highlight the idea that handling human problems requires professionals. Just as you can’t solve technical issues without the proper training, you can’t solve social issues without comparable skills.

What Is Social Engineering in Cybersecurity?

In the context of information security, social engineering is the act of using people’s naturally sociable character in order to trick or manipulate them into exposing private or confidential information that may be used in fraudulent activities, spreading malware, or giving access to restricted systems.

All scams which rely on people’s instinctive desire to be helpful, kind, or to submit to authority fall under the encompassing umbrella of social engineering.

The term was popularized in the IT niche by Kevin Mitnick, a world-famous hacker active in the 90s who later became a security researcher and wrote the book ‘The Art of Deception’.

CSO offers an alternative definition: social engineering is the art of gaining access to buildings, systems, or data by exploiting human psychology, rather than by breaking in or using technical hacking techniques.

Any IT attack which needs to exploit human weakness, alone or in addition to any technical vulnerabilities, can be classified as a social engineering attack.

What Is a Social Engineering Attack and How Does It Work?

Now that we’ve covered what social engineering is, let’s see what a social engineering attack is, how it works, and what the main types of social engineering attacks are.

How Social Engineering Attacks Work

Any IT attack which can be qualified as a social engineering attack must use psychological manipulation in order to convince users to make security mistakes.

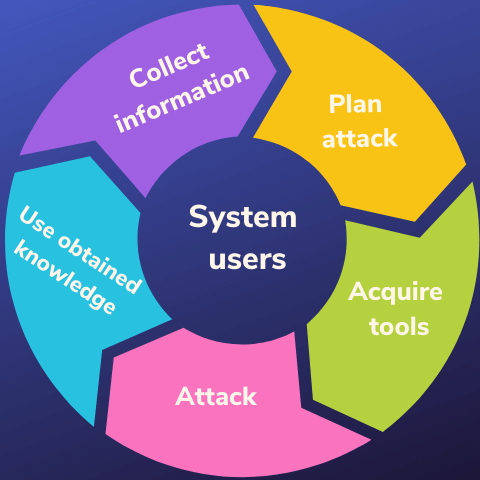

Social engineering attack cycle.

This psychological manipulation needed for a social engineering attack can take many forms:

- The pretext: the attacker makes contact under an innocent pretense in order to start a conversation and build a friendly relationship, then asks for more sensitive info at a later date.

- Baiting: the attackers will bait the victim into doing something unsafe for the allure of something they desire (for example, attackers can leave an infected USB stick lying around with the label ‘Secret team bonuses’).

- Tailgating: in this type of attack, the attacker simply follows an authorized person into a restricted area, looking as relaxed and as ‘belonging’ as possible. While the tailing itself takes place in the physical world, the ultimate goal of the attack is IT-related (for example, the attackers gain entry to the server room).

- Impersonating: the attacker pretends to be someone else, someone with the required authority to make demands (fake tech support scams and CEO fraud or BEC attacks are common forms).

- Quid pro quo: the attacker offers you something and asks for something in return, hiding the maliciousness of their intents (for example, they ask for a password in exchange for a cash reward, claiming to be an IT researcher doing a case study).

- Inducing fear: for example, the attackers pretend to be a 3rd party alerting you to some danger (someone hacked your account, for instance) and ask for your password in order to help solve the problem.

Types of Social Engineering Attacks

Some common forms of social engineering attacks that you’ve definitely heard of are these:

- CEO fraud (the attackers pretend to be your boss or a figure of authority asking for you to perform a task or give them access to sensitive info);

- Phishing (you get an email or some other form of communication claiming to be from a third party you trust, like Google, Facebook, Outlook, Netflix, or Steam, and prompting you to enter your login details to proceed);

- Vishing (voice phishing, usually over the phone);

- Spear phishing and rose phishing (more dedicated types of phishing involving a period of research done on a specific victim in order to have a customized approach; spear phishing aimed at senior executives is known as whaling);

- Fake applications or messages with infected attachments – the attackers act as if the victim requested the message and its attachment, but when the attachment is opened, this allows the malware to get in.
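To make the phishing pattern above more concrete, here is a minimal Python sketch of one check a mail tool might run: does a link really point to the brand it names? This is an illustration under stated assumptions, not a production filter. The `TRUSTED_DOMAINS` allowlist and all function names are hypothetical, and the brand test is a plain substring match that will produce false positives.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; a real deployment would maintain its own.
TRUSTED_DOMAINS = {"google.com", "facebook.com", "netflix.com"}


def hostname_of(url: str) -> str:
    """Return the lowercased hostname of a URL, or '' if it has none."""
    return (urlsplit(url).hostname or "").lower()


def belongs_to_trusted(host: str) -> bool:
    """True if host is a trusted domain or a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)


def mentions_brand(host: str) -> bool:
    """True if the host contains a trusted brand name anywhere (naive)."""
    brands = {d.split(".")[0] for d in TRUSTED_DOMAINS}
    return any(brand in host for brand in brands)


def classify_link(url: str) -> str:
    """Label a link as 'trusted', 'suspicious', 'unknown', or 'invalid'."""
    host = hostname_of(url)
    if not host:
        return "invalid"
    if belongs_to_trusted(host):
        return "trusted"
    if mentions_brand(host):
        # Brand name appears, but the host is not under the brand's domain.
        return "suspicious"
    return "unknown"
```

With this sketch, a link such as `http://google.com.login-verify.example/` is labeled suspicious: the hostname starts with a familiar brand, but it does not actually sit under that brand's domain.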

Social Engineering Attacks Examples

Now that we’ve established what social engineering is and how these attacks work, let’s take a look at famous examples of social engineering hacks. You’ll see that it can happen to anyone, regardless of how big or small the target is. One moment of keeping your guard down can be all that’s needed for hackers to infiltrate your systems.

AIDS or the original ransomware

The original ransomware was a Trojan named AIDS. It was released at a scientific conference by an evolutionary biology researcher who claimed he wanted to raise awareness and money for treating AIDS. Basically, this Trojan is the grandfather of all the ransomware that followed.

It spread on floppy disks, and early victims ran it and passed it along only because they believed they were receiving information on how to help the AIDS relief effort. In this sense, the original ransomware infection also counts as a social engineering attack, since it preyed on people’s desire to help.

Kevin Mitnick’s attack on DEC

Kevin Mitnick, the hacker and social engineering expert who later turned security researcher, made a name for himself through his attack on Digital Equipment Corporation (DEC), which dates back to 1979. He was in league with some hackers who wanted to take a look at DEC’s operating system but said they couldn’t get in without credentials, so Mitnick simply phoned and asked for them.

Mitnick called DEC’s system manager and claimed to be an employee from the development team who was temporarily locked out of his account. The sysadmin promptly gave him a new username and password with a high access level in the organization.

HP’s internal struggles with employees

In 2005 and 2006, Hewlett-Packard was dealing with reputational issues caused by info leaked to the media. In an effort to find out which employees were leaking info, the company hired private investigators, who managed to get their targets’ personal call records from AT&T by claiming to be those targets. The practice was made illegal under federal law following the scandal, but it definitely opened new horizons in social engineering techniques.

The 2014 Yahoo hack

This huge hack, which resulted in hackers gaining the personal info of over 500 million users in 2014, relied on spear phishing. By targeting only a few high-level Yahoo employees with tailored messages, the hackers managed to gain access to Yahoo’s servers. From there, the attackers used cryptography and forged cookies to break into the accounts of regular Yahoo users.

The 2016 US Department of Justice (DOJ) hack

Not even the US Department of Justice is exempt from social engineering attacks. As long as humans work there, they can fall prey to social engineering tactics. A frustrated hacker, seeing that he couldn’t infiltrate the systems any further without an access code, simply called and claimed to be an employee. In other words, he pulled a Mitnick on them. After obtaining the code, the attacker was able to proceed and infiltrate internal communications. He leaked some privileged info as proof of the hack, prompting the DOJ to take its security more seriously.

The infamous Cambridge Analytica-Facebook scandal

Using a very complex method based on building social profiles from every bit of information to be found on people, the political consulting firm Cambridge Analytica was accused of successfully manipulating the US presidential elections and the UK referendum on Brexit, aided by Facebook insiders.

This scandal showed everyone that social engineering hacks can sometimes hit very close to the mark of the term’s original meaning (that of changing society).

Ubiquiti Networks BEC attack

Ubiquiti Networks was hit in 2015 by a simple Business Email Compromise (BEC) attack that allowed hackers to steal $46.7 million. It was as simple as pretending to be high-level managers, contacting employees in the financial department, and requesting that a transaction be made.
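BEC messages like this often leave traces in the email headers. The sketch below, written in Python with only the standard library, shows two simple checks a finance team's mail tooling could run. It is a hedged illustration: `COMPANY_DOMAIN`, `EXECUTIVE_NAMES`, and the function names are hypothetical, and real BEC detection needs far more than header comparisons.

```python
from email import message_from_string
from email.utils import parseaddr
from typing import List

COMPANY_DOMAIN = "example.com"      # hypothetical company domain
EXECUTIVE_NAMES = {"jane doe"}      # hypothetical list of executives


def domain_of(address: str) -> str:
    """Return the lowercased part of an address after the last '@'."""
    return address.rpartition("@")[2].lower()


def bec_warnings(raw_email: str) -> List[str]:
    """Return human-readable warnings for common BEC header patterns."""
    msg = message_from_string(raw_email)
    from_name, from_addr = parseaddr(msg.get("From", ""))
    _, reply_addr = parseaddr(msg.get("Reply-To", ""))

    warnings = []
    # An executive's display name paired with an address outside the company.
    if (from_name.strip().lower() in EXECUTIVE_NAMES
            and domain_of(from_addr) != COMPANY_DOMAIN):
        warnings.append("executive display name with external sender address")
    # Replies silently redirected to a different domain than the sender's.
    if reply_addr and domain_of(reply_addr) != domain_of(from_addr):
        warnings.append("Reply-To domain differs from From domain")
    return warnings
```

A message that shows an executive's name but comes from an outside address, with replies routed to yet another domain, would trigger both warnings. A payment request flagged this way is a good candidate for confirmation over a separate channel, such as a phone call.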

The RSA breach of 2FA

The SecurID tokens for two-factor authentication from RSA were widely considered almost unbreakable. But that all changed in 2011, when people inside the company succumbed to phishing, exposing the company’s data and costing it $66 million in the process.

Initially, RSA employees received a fake email claiming to be from the recruiting party of another company. The email contained a malicious Excel sheet attached to the message. If the attachment was opened, a zero-day Flash vulnerability allowed the hackers to install a backdoor Trojan into the infected computer.

While the seriousness of the breach could not be properly assessed, RSA researchers believed it might have affected the security of their 2FA token technology, so they had to redo it from scratch. In the end, this is where the losses went: into the effort of rebuilding a technology everyone previously thought was impenetrable.

The Target breach of 2013

Target’s Point of Sale system was breached in 2013 by hackers who managed to expose and steal the credit card info of over 40 million Target customers. While the info was stolen by a malware script, the entry point for this malware was a clever bout of social engineering. Knowing that it would be easier to target a smaller fish first, the attackers breached the security of Fazio Mechanical Services via phishing.

This third-party supplier had previously been given access to Target’s systems, so once it fell prey to phishing, the attackers could move on to attacking Target, the real prize.

The Badir brothers

In the 90s, the three Badir brothers were the most extraordinary hackers plaguing the Middle East. Their story is fascinating: all three were blind but had a remarkable talent for hacking. It wasn’t all technical know-how, but also social engineering prowess.

They could imitate any voice on the phone (including the voice of the investigator who was on to them) and could work out a victim’s PIN simply by hearing it typed in. They would also chat up the secretaries of high-level enterprise bosses, being all charming and asking for details about the men these employees worked for. This led them to find out personal details and successfully guess the passwords of these executives.

All these skills helped them carry out a long series of successful social engineering attacks all over the Middle East.

How to Protect Against Social Engineering Attacks

Unfortunately, precisely because social engineering attacks rely on people’s trust, it’s hard to protect against them through technical means alone. That is exactly what social engineering is: tricking and manipulating people into helping the hackers circumvent technical protections in place.

There’s both good news and bad news about this. The bad news is that no matter how fantastic your cybersecurity suite is, you can still get easily hacked via social engineering attacks if you place your trust in the wrong people.

The good news is that as long as you keep yourself and every employee in your organization informed about what social engineering is and about the latest techniques, you will be far less vulnerable.

There’s only one good counter for social engineering: awareness and cybersecurity education. Here is where you can start on cybersecurity education if you are a regular user.

If you are in charge of an organization, take a few extra steps:

- reduce and control admin rights among regular users;

- create frequent cybersecurity training sessions and spread awareness;

- perform staff exercises and get penetration testing;

- prepare for incident management as well, not just prevention;

- activate your spam filter. Because email is used for a lot of social engineering, the easiest way to protect against it is to block spam from reaching your inbox;

- don’t respond to scammers. By doing so, you demonstrate that your email address is legitimate, and they will simply send you more;

- have a robust cybersecurity solution in place.
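The first step in the list, reducing and controlling admin rights, lends itself to a periodic automated check. Below is a minimal Python sketch of such an audit. It assumes you can export each user's roles into a dictionary; the `"admin"` role name, the data shapes, and the function name are all hypothetical.

```python
from typing import Dict, List, Set


def unapproved_admins(user_roles: Dict[str, Set[str]],
                      approved_admins: Set[str]) -> List[str]:
    """Return users who hold the 'admin' role but are not on the approved list."""
    return sorted(
        user
        for user, roles in user_roles.items()
        if "admin" in roles and user not in approved_admins
    )
```

For example, given `{"alice": {"admin"}, "bob": {"user", "admin"}, "carol": {"user"}}` and an approved list containing only `alice`, the function returns `["bob"]`, the one account whose admin rights should be reviewed.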

How Can Heimdal™ Help?

Our Heimdal™ Privileged Access Management allows administrators to manage user permissions easily. Your system admins will be able to approve or deny user requests from anywhere or set up an automated flow from the centralized dashboard. Furthermore, Heimdal Privileged Access Management is the only PAM solution on the market that automatically de-escalates on threat detection. Combine it also with our Application Control module, which lets you perform application execution approval or denial or live session customization to further ensure business safety.

Managing user permissions and their access levels is not only a matter of saving the time of your employees but a crucial cybersecurity infrastructure project.

Heimdal® Privileged Access Management

- Automate the elevation of admin rights on request;

- Approve or reject escalations with one click;

- Provide a full audit trail into user behavior;

- Automatically de-escalate on infection.

I hope you now understand more about what social engineering is and that you’ll be able to recognize an attempted social hack. Keep reading more about cybersecurity and be on your guard.

This article was originally published by Miriam Cihodariu in August 2019 and was updated by Antonia Din in March 2022.
