Virtual Reality (VR) and Augmented Reality (AR) are popular terms, yet the technologies behind them aren’t new at all. In fact, they’ve been around for a few decades now. In the future, they will probably be widely used for both recreational and professional purposes. The global VR and AR market was valued at around $26.7 billion in 2018 and is expected to reach approximately $814.7 billion by 2025.

Even though these technologies pose relatively few security and privacy risks today, they could become exposed to real threats in the next few years as their popularity grows.

In this article, I’ll first walk you through a brief history of these technologies, then look at some potential security and privacy issues for VR and AR. Finally, I’ll give you a few key pieces of advice so you can stay safe.

Virtual Reality Explained

To put it simply, virtual reality devices and programs create computer-generated artificial environments. Instead of watching content on a display, a person experiences them through an interface, most commonly a headset. The purpose of VR systems is to trick the brain into believing the viewed content is as real as possible.

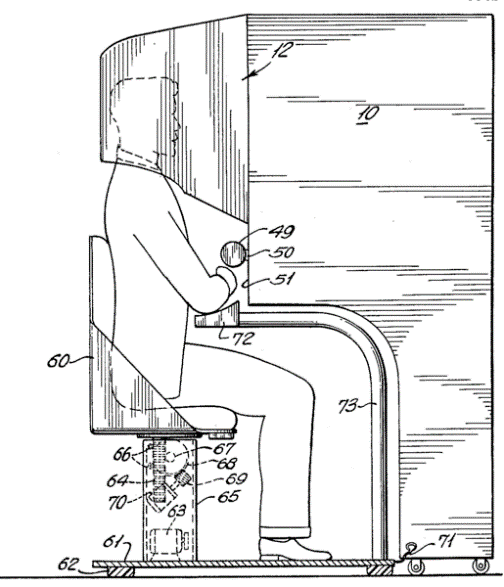

The origins of VR can be traced back to the late 1950s when filmmaker Morton Heilig designed the Sensorama. This was an arcade-style cabinet, with a 3D display, stereo sound, vibrating seat, scent creator, and even a fan to simulate the blowing wind. But Heilig’s invention was ahead of its time and didn’t prove to be successful in that period.

Morton Heilig’s Sensorama device, Figure 5 of U.S. Patent #3050870

Over the years, many experiments were conducted in this industry, and some quite remarkable VR equipment was produced.

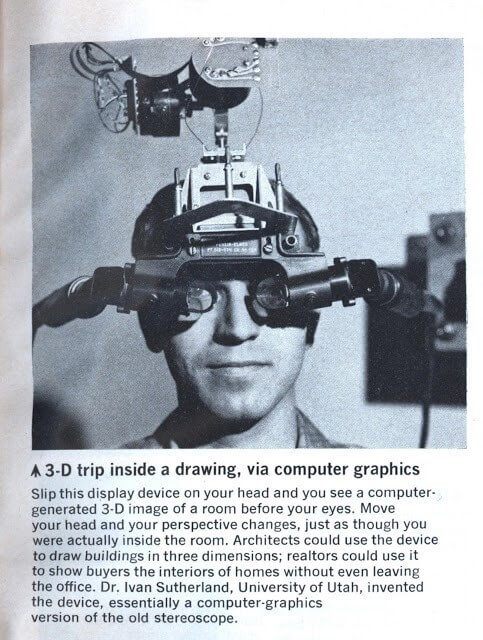

Here is the first actual VR head-mounted display that was created by computer scientist Ivan Sutherland in 1968:

Ivan Sutherland’s VR Headset, 1968

And check out this headset developed by NASA in 1990, which was also accompanied by accessories such as gloves and a suit:

NASA’s Virtual Interface Environment Workstation (VIEW), 1990

If you’d like to read more about the history of VR systems, I suggest you check out this resource:

What about Augmented Reality?

AR overlays graphics onto one’s view of the real world, as opposed to VR, which tries to immerse the user in a fully virtual world.

The origins of AR can also be traced back to Sutherland’s 1968 creation, which I mentioned above.

Another important milestone in the history of AR is Myron Krueger’s “Videoplace”, a project which started out in the mid-1970s and continued for many years. His creation was used to project the silhouettes of several people located in different rooms, whether they were in the same building or on opposite sides of the world. Their “shadows” could interact with one another, creating an experience never witnessed before.

For more AR goodness, access this article on the history of augmented reality (Infographic included):

But what are the security and privacy risks of AR and VR?

#1. Eye Tracking

Some say that eye tracking in VR will be a game changer. Why? Because it improves accuracy and the user experience, enables dynamic focus, and helps developers understand players’ emotions so they can craft better VR experiences. Others think that eye tracking could even make these systems more secure, since eye scanning can serve as a biometric identifier that lets users log in safely.

But could gaze tracking in VR be used for anything besides playing games and similar activities? Well, it could. For instance, advertisements could easily be included in VR games, just like they are displayed in regular mobile games. Your interest in a certain brand would be captured much better than through a traditional click on an online banner, because advertisers would be able to see more accurately how you perceive an ad.

According to Gerald Zaltman, 95% of purchase decisions happen in the subconscious mind. And one of the best ways for marketers to take a peek into the subconscious of consumers is by using eye tracking technologies. This way, market researchers can literally see through their customers’ eyes.

But as exciting as this may seem for marketing professionals, it can be just as scary for the people being observed.
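To make the concern more concrete, here is a minimal sketch of how raw gaze data could be turned into an attention metric for an ad. It is only an illustration under my own assumptions: the names `GazeSample`, `Region` and `dwell_time` are hypothetical, the coordinates are assumed to be normalized to the 0–1 range, and no real headset SDK or advertising platform is being described.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class GazeSample:
    """One hypothetical gaze reading: a timestamp plus where the user looked."""
    t: float  # time in seconds
    x: float  # horizontal gaze position, assumed normalized to 0..1
    y: float  # vertical gaze position, assumed normalized to 0..1


@dataclass(frozen=True)
class Region:
    """A rectangular area of the view, e.g. where an ad is displayed."""
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, sample: GazeSample) -> bool:
        return (self.left <= sample.x <= self.right
                and self.top <= sample.y <= self.bottom)


def dwell_time(samples: Sequence[GazeSample], region: Region) -> float:
    """Total seconds the gaze stayed inside the region.

    Each sample is assumed to hold until the next one arrives, so the
    last sample contributes no time of its own.
    """
    total = 0.0
    for current, following in zip(samples, samples[1:]):
        if region.contains(current):
            total += following.t - current.t
    return total


if __name__ == "__main__":
    # Made-up readings taken every 0.1 seconds.
    readings = [
        GazeSample(0.0, 0.50, 0.50),
        GazeSample(0.1, 0.55, 0.50),
        GazeSample(0.2, 0.90, 0.90),
        GazeSample(0.3, 0.50, 0.60),
        GazeSample(0.4, 0.10, 0.10),
    ]
    ad_area = Region(left=0.4, top=0.4, right=0.6, bottom=0.7)
    print(f"Time spent looking at the ad: {dwell_time(readings, ad_area):.2f} s")
```

With these made-up readings, the gaze rests on the ad for about 0.3 seconds. The point is how little it takes: a stream of timestamps and coordinates is enough to estimate what caught your eye and for how long, which is exactly why this kind of data is sensitive.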

#2. Blackmailing / Sextortion

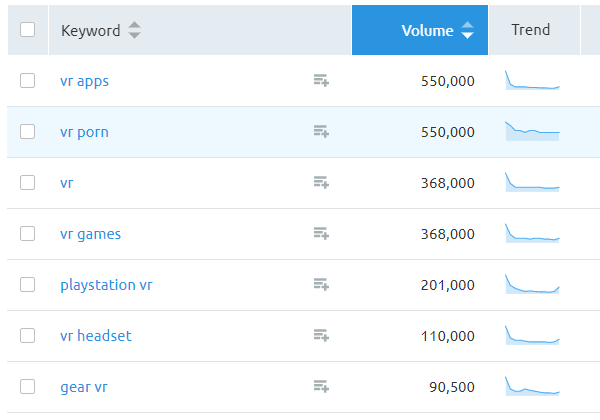

“VR Porn” is the number 1 search term associated with virtual reality (alongside “VR Apps”).

Source: Semrush search

It’s no secret that VR adult entertainment has become extremely popular. In fact, the global VR adult entertainment market is expected to reach $1 billion by 2025.

Malicious actors may take advantage of the industry’s popularity and resort to sextortion. This means they try to trick you into believing they have proof that you’ve visited adult websites and urge you to pay them so they don’t leak it. Sometimes, they even include a password you’ve used, stolen in a data breach, to make the scam email look more legitimate. Receiving such an email can be an unpleasant experience, but keep in mind that the claim is fake.

But things can actually get worse. You could have spyware installed on your computer which records your browsing habits and everything you do on your computer. Thus, hackers could know the type of content you have searched for and use it against you. As a general rule, try not to click on ads while browsing adult websites.

Here is a complete guide we put together for safely browsing adult content websites:

#3. Altering reality

You know how the saying goes – Seeing is believing.

Let’s picture a future where you constantly have an AR device attached to your head, with different images overlaid on the real world. Think Google Glass, a gadget which failed with general consumers when it was released a few years ago and is now being pushed to businesses. But this doesn’t mean that these kinds of devices will never gain traction with regular consumers. In fact, other companies are already selling similar solutions and trying to build hype around this technology.

Right now, many consumer AR headsets do little more than reflect your smartphone’s screen onto the lenses. But eventually, things could get much more realistic and potentially turn into something bad.

Here is a highly interesting read for dystopian sci-fi enthusiasts like myself, written by David Gewirtz, where he pictures a world dominated by Augmented Reality. There, AR glasses can display a visual indicator of the temperature of your cooking pan. They can sense if you’re low on certain kitchen supplies and order more for you. They can make you feel like you are in a movie theater when you are actually watching a film on your 42-inch TV. Owners are able to alter their looks, removing facial imperfections or showing themselves as thinner to other AR glasses users.

But the more popular a technology becomes, the more problems arise. In Gewirtz’s world, adult movie vendors side-load apps onto the smart glasses platform, which allow users to make people look like someone else, for instance a celebrity, or appear naked. Gender or race change hacks also become available. Violence and other gruesome details can be shown as well. Malicious actors trick drivers into thinking a road goes straight when there is actually a tight curve coming up, causing serious accidents.

Fortunately, these are just some imaginary scenarios for now. But how far are we from this kind of reality?

#4. Fake identities / Deepfakes

Advances in facial recognition and machine learning now make it possible to manipulate people’s voices and appearances, resulting in what may look like genuine footage. In short, this is what deepfake technology is based on.

For instance, look at this deepfake video of Mark Zuckerberg:

Or at this one of Kim Kardashian:

And at this video of Rasputin performing Beyonce’s “Halo”:

Of course, you can easily spot these as fake. But soon enough, through the power of the tracking sensors found in VR systems, deepfakes could get much more convincing. Motion tracking sensors could record someone’s movements, and that data could be used against them to create digital replicas.

In fact, the sensors inside VR headsets could record people’s facial movements so precisely that the resulting deepfakes could fool almost anyone.

Do you think this sounds like science fiction? Well, Facebook is currently working to make VR avatars look and move exactly like you.

In VR settings, people are now interacting with each other via avatars. The tracking sensors in VR systems are what translate our real-life hand and head movements into the movements of our virtual personas. But due to technical constraints, these virtual images of us are essentially simple, even caricature-like and use facial expressions that inaccurately represent what we’re actually doing with our faces.

Yet, advancements won’t stop here.

Here is what Yaser Sheikh, Director of Facebook Reality Labs, has shared:

The real promise of VR […] is that instead of flying to meet me in person, you could put on a headset and have this exact conversation that we’re having right now—not a cartoon version of you or an ogre version of me, but looking the way you do, moving the way you do, sounding the way you do. – Yaser Sheikh, via Wired

Source: Wired.com

In the future, Sheikh and his team are also planning to extend the face scan to the whole body to make VR meetings as realistic as possible.

As fascinating as it may sound from a tech perspective, from a cybersecurity point of view this is quite frightening. These VR systems would need rock-solid security measures in place, because otherwise your identity could easily end up in the wrong hands.

What you can do to stay safe when using VR and AR systems (for now)

#1. Don’t disclose information that is too personal or unnecessary

Do not share anything that you don’t actually need to. For example, don’t share your payment information unless you are actually purchasing something.

#2. Review privacy policies

Yes, this task can be daunting, as these documents are quite lengthy. But do your best to find out how the companies behind the platforms you create accounts on store your data, and what they do with it. For instance, are they sharing your data with third parties? What kind of data are they collecting and sharing?

#3. Make sure you are using the Internet safely

One way to keep your identity and data private on the web is by using a VPN service.

Also, if you join any VR and AR online communities, be careful about which websites you end up on. You should also be using a proactive internet security solution that checks whether every link you click is safe and not infected with malware.

Tying it all together

AR and VR clearly provide many great opportunities to facilitate research and enhance the user experience. Yet, we should be extremely cautious about the potential harm they could bring along and stay aware of the privacy risks involved.

What’s your take on AR and VR? Do you think these technologies are risky? Let me know in the comments section below.
